The rapid evolution of generative models and automated tutoring systems has fundamentally altered the classroom dynamic, yet the gap between high-tech institutions and under-resourced schools continues to widen across the country. As educational leaders witness a transformation in how knowledge is consumed and produced, the urgency for a centralized, “sector-blind” national pilot program has moved from a theoretical debate to a practical necessity. Recent findings suggest that while some elite private academies are already integrating advanced predictive analytics to track student wellbeing, remote and lower-socioeconomic schools often struggle with basic connectivity or a lack of teacher training in digital literacy. This disparity does not merely affect current grades; it threatens to create a permanent class of students who are ill-equipped for a workforce that assumes AI fluency as a baseline requirement. Without a coordinated federal intervention, the promise of technology as a great equalizer may instead become the primary driver of a two-tiered educational system that leaves the most vulnerable populations behind.

Navigating the Dualities of Technological Integration

Balancing Individual Progress with Cognitive Autonomy

The deployment of artificial intelligence in schools presents a complex trade-off between hyper-personalized learning pathways and the preservation of critical thinking skills. Proponents of these systems point to the ability of AI to act as a twenty-four-hour tutor, providing immediate feedback and scaffolding for students who might otherwise fall through the cracks of a standardized curriculum. This individualized approach allows educators to meet students exactly where they are, tailoring the difficulty of tasks to match their specific cognitive needs and pacing. When implemented correctly, these tools can demystify complex subjects like advanced mathematics or linguistics by breaking them down into digestible, interactive modules that adapt in real-time. This level of customization was previously impossible in a traditional classroom setting, where a single teacher must manage the diverse needs of thirty or more children simultaneously.

However, a growing consensus among researchers warns of the dangers of “cognitive off-loading,” where students rely so heavily on automated assistance that they fail to develop essential problem-solving muscles. If a machine provides the answer or even the structure of an argument before a student has struggled with the concept, the neurological pathways associated with deep, lasting learning may not fully form. This concern is particularly acute in humanities and social sciences, where the nuance of human judgment is easily mimicked by sophisticated language models. The challenge for a national strategy lies in establishing pedagogical guardrails that ensure AI serves as a “co-pilot” rather than a replacement for intellectual effort. Educational frameworks must prioritize skills in “prompt engineering” and critical evaluation, teaching students how to interrogate the output of an algorithm rather than accepting it as an absolute or unbiased truth.

Addressing Privacy and Algorithmic Bias in the Classroom

As schools become increasingly reliant on data-driven decision-making, the risks associated with data privacy and systemic bias move to the forefront of the policy discussion. Every interaction a student has with an AI platform generates a digital footprint that, if not properly protected, could be exploited or mishandled, leading to long-term consequences for a minor’s digital identity. A national pilot program must establish rigorous standards for how educational data is collected, stored, and shared, ensuring that private corporations do not have unfettered access to sensitive student information. Beyond privacy, there is the persistent issue of bias within the training data of the AI models themselves. If these systems are built on datasets that reflect historical inequalities, they may inadvertently replicate those prejudices, steering students from marginalized backgrounds away from certain opportunities or misinterpreting their academic potential based on flawed cultural assumptions.

To mitigate these risks, a unified national framework must mandate transparency from technology providers and require regular audits of the algorithms used in public and independent schools. This involves moving away from “black box” technologies where the decision-making process is hidden from both teachers and parents. By centering equity as a guiding principle, a national strategy can ensure that AI tools are designed with diverse perspectives in mind, including localized adaptations for regional communities and Indigenous populations. For instance, AI can be leveraged to preserve and revitalize Indigenous languages by creating immersive digital resources that connect students to their heritage while simultaneously teaching modern technical skills. Ensuring that these high-quality, culturally sensitive tools are available regardless of a school’s geographic location or funding status is the only way to prevent technology from exacerbating existing social divides.

Strategic Frameworks for National Implementation

Cultivating Teacher-Led Excellence and Professional Development

The success of any national AI strategy depends less on the software itself and more on the capability of educators to integrate it meaningfully into their daily instruction. Currently, the landscape of teacher readiness is highly fragmented, with some staff members pioneering innovative uses of AI while others feel overwhelmed by the pace of change. A national pilot program must prioritize investment in comprehensive professional development that goes beyond basic technical training. Teachers need to understand the ethical implications of AI, how to use dashboards to monitor student wellbeing, and how to redesign assessments to remain relevant in an era of ubiquitous automation. By positioning the teacher as the primary architect of the AI-enhanced classroom, the government can ensure that technology supports rather than replaces the vital human connection that lies at the heart of effective pedagogy.

Building on this foundation, professional development should be structured as a collaborative, ongoing process rather than a one-off seminar. Connecting existing “pockets of excellence” into networks allows high-performing schools to share their successful strategies and curricula with those just beginning their transition. This peer-to-peer learning model helps to demystify the technology and provides practical, classroom-tested examples of how AI can reduce administrative burdens, such as grading routine assignments or organizing schedules. When teachers are freed from these repetitive tasks, they can devote more time to high-impact activities like small-group mentoring and creative project-based learning. A national strategy that focuses on empowering educators ensures that AI becomes a tool for professional enhancement, fostering a culture where technology and human expertise work in tandem to elevate student outcomes across the entire school system.

Transforming Assessment and Evidence-Based Policy

The introduction of AI into the educational ecosystem necessitates a fundamental rethink of how academic achievement is measured and validated. Traditional methods of assessment, such as take-home essays or standardized multiple-choice tests, are increasingly vulnerable to AI interference, making them less reliable indicators of a student’s true mastery. A national pilot program provides the perfect sandbox for testing new, “AI-proof” assessment models that prioritize process over product. These might include oral defenses, live demonstrations, or collaborative projects where the use of AI is explicitly documented and evaluated. By shifting the focus to how a student uses technology to solve complex problems, schools can prepare learners for a professional world where the ability to collaborate with machines is a critical competency. This evolution in testing ensures that the educational system remains rigorous and relevant in a rapidly changing world.

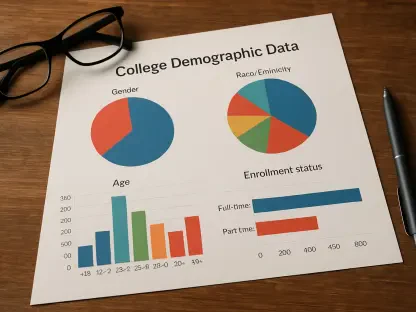

Furthermore, a large-scale national pilot program serves as a critical engine for generating the data needed to inform long-term evidence-based policy. By tracking the impact of various AI tools across different demographics and regions, policymakers can identify which interventions yield the greatest improvements in literacy, numeracy, and student engagement. This data-driven approach allows for the strategic allocation of resources, ensuring that future funding is directed toward technologies that have a proven track record of closing the achievement gap. Rather than relying on the marketing claims of tech companies, a unified national strategy enables the government to set high standards for safety, efficacy, and inclusivity. This rigorous evaluation process will be essential for building public trust and ensuring that the integration of AI into schools remains a deliberate, transparent, and beneficial endeavor for every student in the nation.

Future Considerations for Educational Resilience

The implementation of a coordinated national AI strategy is a necessary response to the growing disparity between educational environments. By moving away from fragmented, localized trials and toward a unified framework, the focus shifts from mere technological adoption to the creation of an equitable learning landscape. This transition requires a significant investment in teacher training, ensuring that educators are not just users of technology but leaders of its ethical application. Furthermore, the emphasis on evidence-based policy allows for the continuous refinement of AI tools, filtering out ineffective or biased software in favor of platforms that demonstrably improve student outcomes. The success of this initiative depends on a commitment to transparency and a refusal to allow geographic or economic factors to dictate a child’s access to the most advanced tools available.

Looking ahead, the path toward a fully integrated AI educational system requires a sustained focus on cross-sector collaboration and adaptive governance. Educational authorities must keep pace with the rapid evolution of these technologies, establishing a permanent advisory body that includes teachers, parents, data ethicists, and students. This collaborative approach will be vital for addressing emerging challenges such as deepfakes, automated misinformation, and the long-term psychological effects of human-AI interaction. Schools should move toward a model where digital literacy is not a separate subject but a foundational element of every discipline, preparing students to be critical, ethical, and creative participants in a high-tech society. By treating the national AI strategy as a living, evolving roadmap, the educational system can ensure it remains resilient, inclusive, and capable of fostering the next generation of thinkers and innovators.